One of the most important e-mails to hit our Inbox each week (barring the occasional break) is the Veeam R&D Forums Digest by Anton Gostev.

I’ve mentioned it before, but this week’s post is especially important, so it’s reproduced below verbatim.

To subscribe:

- Sign into the Veeam R&D Forums

- Top right corner

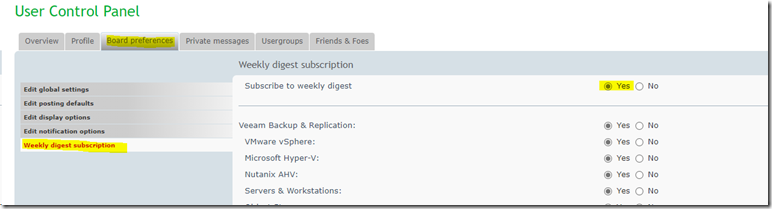

- Board Preferences

- Weekly digest subscription

- Subscribe to weekly digest: Yes

THE WORD FROM GOSTEV

[QUOTE]

I’ve spent many years now advocating for the usage of air-gapped (offline) or immutable backup storage in these newsletters, and I’m happy to see many customers rushing to implement this best practice. Our support big data indicates that the number of such installs nearly doubled in less than 2 years, thanks to heightened ransomware concerns in particular. But for me, it also means that I can stop ringing this same bell so often and change the tune to cover a slightly different scenario: “We have a solid backup strategy. What else can we do now, and what do we do when the attack actually happens?” I hope that sharing what I learnt from the customers who’ve been through this will help everyone better prepare to face the same, as the unfortunate reality is that it’s unavoidable for most of us.

First, do the following as soon as possible:

1. If you do not have cyber insurance yet, get it now. There are many good reasons to do so, but the most important one is far from obvious: without cyber insurance, you will have great difficulty finding good recovery specialists to help you, as all the decent ones are now employed by the cyber insurance organizations. Just think how bad the staff shortage in the cyber-security industry has been since the rise of ransomware, and what level of expertise you will be able to find on short notice as a result.

2. Think through the reserve communication channels: cell phones and fallback email (something completely external like Gmail). You will need something to communicate with vendors like Veeam, but your usual telephony and email systems will likely be down. So ensure that the reserve communication channels are registered with your IT vendors; otherwise, you may not even be able to open a support case on behalf of your company.

3. Plan where you are going to restore your environment to following an attack. I’ve now seen it more than once when customers were caught with their pants down by police confiscating their production storage systems as evidence. So they had good backups, but no hardware to restore them to. And since getting new hardware will take forever due to the current supply chain issues, while having spare hardware just sitting there is not something many can afford, restoring your environment to cloud IaaS may literally be the ONLY option. Veeam enables you to restore directly to AWS/Azure/GCP, and some of our Veeam Cloud Connect service providers can restore your backups into their data center too, but be sure to test the entire process so that there are no surprises. This will also force you to put all the required networking in place now, rather than wasting time on it later.

Once you become aware of the ongoing attack:

1. Take everything potentially affected offline to minimize damage. This is painful, but it’s no longer just about your production data being encrypted: hackers may be streaming your critical production data out of the environment, which will enable them to threaten you with its disclosure later.

2. Open support cases with your critical infrastructure vendors, including Veeam. You will likely need assistance from all of them in due time. When you open a case, do remember to mention how you can be reached. Most folks forget to do this in the heat of the moment, leaving our SWAT team unable to contact you because all your systems are down.

3. Do NOT rush to restore your backups. The intrusion usually happens a few weeks before the actual attack, so there’s rarely a point in mass-restoring your most recent backups, unless you’re fine wasting time restoring your environment to a state where the sleeping ransomware is already present and/or the threat actor already has remote access with high privileges (plus multiple well-hidden fallback options for when you start disabling known admin accounts or changing their passwords).

4. Set realistic expectations with the executive team: full recovery may take a long while. In one recent example of an actual attack, the customer had solid Veeam backups, but it took the security specialists assigned by the cyber-insurance company 2 weeks to reconstruct what happened before they even allowed restoring those backups to start! In particular, they found that the ransomware had been inside the environment for 4 weeks before the actual encryption attack!

5. Only once the attack is fully understood can the recovery of recent backups start, and it will always be staged. First, each machine is restored into a quarantine environment, where the actual cleaning of the identified threats takes place. In addition, each machine is scanned by multiple advanced security tools to ensure there are no other sleeping threats. Only after all of this is done is the cleaned-up machine state moved into the production environment. Those of you who know Veeam well are probably jumping out of your seats right now, because it all sounds waaaay too familiar and is exactly how our Staged Restore works, right? You’re correct, but…

6. This will be the moment when your earlier backup storage selection process flashes before your eyes, as slow backup storage will not let you do any of that magic. This is why we suggest using small but fast primary backup repositories! In another recent attack, the customer ultimately chose to perform an entire VM restore of each VM from their deduplicating backup storage target into an isolated lab with an all-flash array first, do the cleaning and scanning there, and then move the processed machines to the production storage. Even though this meant effectively performing an entire VM restore twice, it was still way faster than running VMs from backup on dedupe storage. That is because entire VM restore from dedupe storage is actually quite fast with Veeam: we use a “sequential reads > random writes” approach to get decent restore performance, as dedupe appliances are optimized for sequential I/O.

This newsletter is getting too long, and it’s a good time to finish for today, as I think I have covered all of the most important items. Next week should be no less interesting, though, as I plan to follow up with details on the core infrastructure changes some of the impacted customers decided to implement as a result of the cyber-attack investigation.[/QUOTE]

There is absolutely no doubt that the above will benefit someone. It’s well worth the read, and subscribing to the digest is well worth it too.

Philip Elder

Microsoft High Availability MVP

MPECS Inc.

Our Web Site

PowerShell and CMD Guides